With AI agents, the right question to ask is: “Why is the CPU becoming important again?” The answer is that an agent is usually not a workload that runs one giant model back-to-back. It’s an “operating layer” that stays on, makes lots of small decisions in real time, talks to external systems, and runs multiple tasks at once. The model itself can sometimes be accelerated by a GPU/NPU, but a large share of what an agent does all day naturally falls into areas where the CPU is strong: orchestration, I/O, networking, memory management, multi-process / multi-thread scheduling, security boundaries, and the “control plane” that coordinates everything.

In agent workloads, performance is often determined less by raw CPU speed and more by “system” components such as RAM capacity/bandwidth, SSD access, and network latency. That’s why the practical investment thesis is often less about the CPU in isolation and more about the platform architecture around the CPU (memory + I/O).

I’ll keep the point simple in this piece: agents “like” CPUs for two reasons, (1) reliable, low-latency control and (2) data locality and security. Then I’ll map that into an investment lens for AMD, Arm, and IBM. I’ll also use OpenClaw as a concrete example of why people want to run agents on their own hardware (like Mac minis).

What do agents do technically and why is the CPU critical?

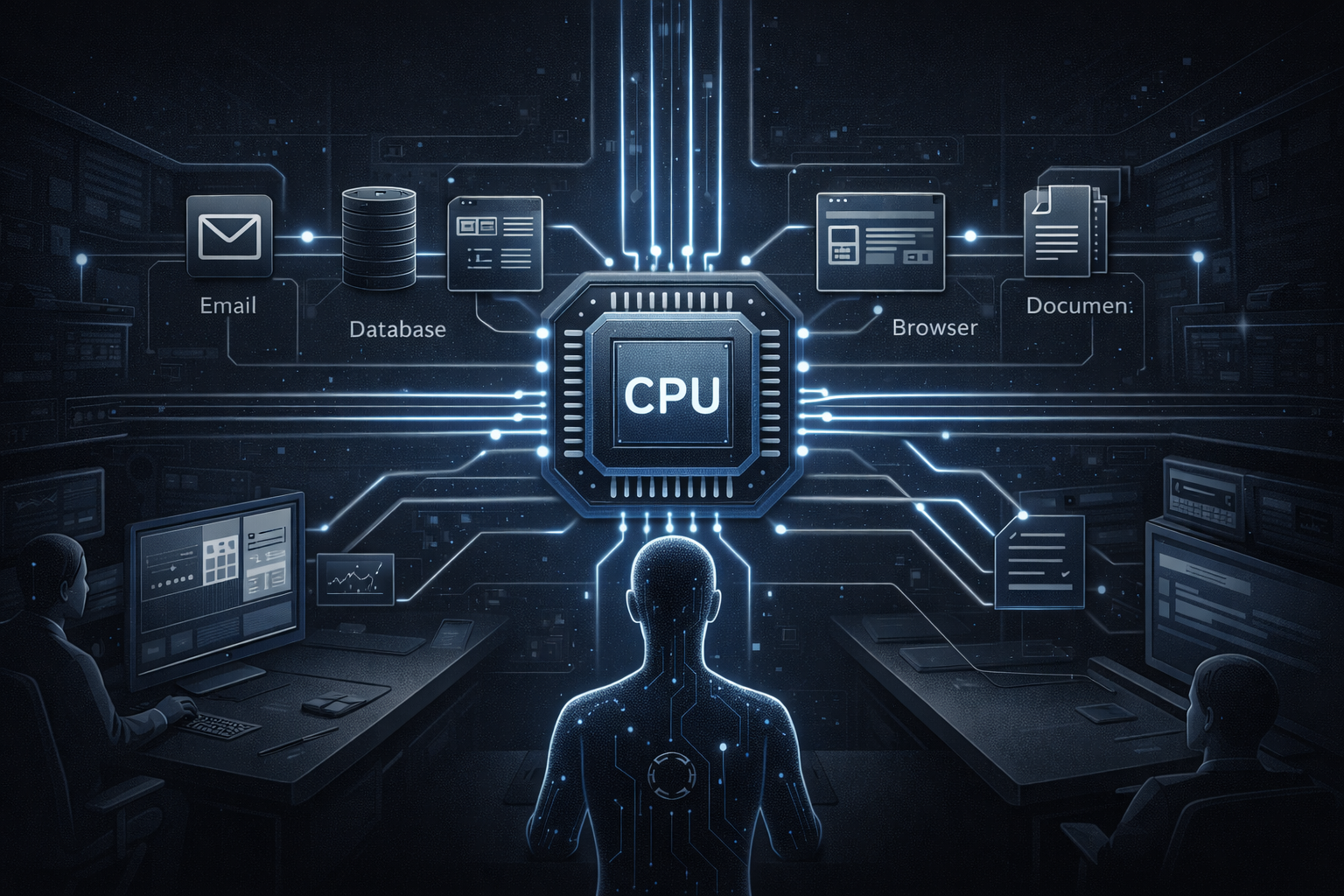

In classic chatbot usage, you send a request, the model responds, and the interaction ends. Agent workflows are different. An agent takes a goal (e.g., “categorize this week’s invoices”) and then works through it step by step: planning, calling tools (email, browser, file system, CRM), checking results, looping back when needed, logging actions, and often doing this across multiple tasks in parallel.
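To make that loop concrete, here is a minimal Python sketch of the plan, tool call, check, and log cycle. Everything in it is an assumption for illustration: the tool names (`fetch_invoices`, `categorize`), the fixed three-step plan, and the keyword rule standing in for a model call are hypothetical and do not reflect how OpenClaw or any specific agent framework works.

```python
from collections import deque


def fetch_invoices() -> list[str]:
    # Hypothetical tool: a real agent would call an email or file API here.
    return ["ACME hosting 120.00", "Cafe lunch 18.50"]


def categorize(line: str) -> str:
    # Stand-in for a small model call; a keyword rule keeps the sketch runnable.
    return "infrastructure" if "hosting" in line else "meals"


def run_agent(goal: str) -> tuple[dict[str, str], list[str]]:
    # Stand-in for a model-produced plan.
    steps = deque(["fetch", "categorize", "verify"])
    invoices: list[str] = []
    categories: dict[str, str] = {}
    log: list[str] = []

    while steps:
        step = steps.popleft()
        if step == "fetch":
            invoices = fetch_invoices()
        elif step == "categorize":
            for line in invoices:
                categories.setdefault(line, categorize(line))
        elif step == "verify":
            # Check results and loop back if any invoice is still uncategorized.
            missing = [line for line in invoices if line not in categories]
            if missing:
                steps.extend(["categorize", "verify"])
        log.append(f"{goal}: {step} done")  # action log

    return categories, log


categories, log = run_agent("categorize this week's invoices")
```

Note that nothing in this loop is matrix math. The queueing, branching, checking, and logging are ordinary CPU work, which is the point the section is making.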

Technically, that turns into a few dominant patterns:

Lots of small model calls / lots of small operations: Each step may not be a heavy “matrix multiplication,” but hundreds of small decisions, routing actions, filtering, and data-prep tasks add up. That’s CPU territory. A meaningful portion of agent work is also not “running a huge model” but glue steps like tool-calling, data preparation/parsing, rule checks, error handling, and routing. These typically run on the CPU, and as their latency accumulates, they end up defining the user experience.

I/O and integration become dominant: Agents create real-world value by calling APIs, querying databases, reading files, and automating browsers. These are less GPU problems and more CPU + network + memory problems, and they also depend on a good scheduler.

Concurrency and task contention: An agent is not “one inference.” It runs multiple tasks in parallel, which makes thread/process management and resource sharing a real problem. Academic work also points out that coordinating concurrent/conflicting demands in agentic workloads is its own optimization challenge.
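The I/O and concurrency patterns can be sketched with Python's standard `asyncio` module. This is an illustrative sketch under stated assumptions: the tool names and the 0.1 second delays are hypothetical, and `asyncio.sleep` stands in for waiting on a network or disk response.

```python
import asyncio
import time


async def call_tool(name: str, delay: float) -> str:
    # asyncio.sleep stands in for waiting on an external system.
    await asyncio.sleep(delay)
    return f"{name}:ok"


async def run_tasks() -> list[str]:
    # Three hypothetical tool calls issued concurrently from one CPU thread.
    # gather returns results in the order the calls were passed in.
    return await asyncio.gather(
        call_tool("email", 0.1),
        call_tool("crm", 0.1),
        call_tool("files", 0.1),
    )


start = time.perf_counter()
results = asyncio.run(run_tasks())
elapsed = time.perf_counter() - start
print(results, f"{elapsed:.2f}s")
```

Because the waits overlap, the total elapsed time should be close to the longest single wait rather than the sum of all three. The scheduling that makes this possible runs on the CPU, not on an accelerator.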

A simple intuition helps: the GPU (or NPU) accelerates “compute”; the CPU runs the “system.” Agents are system-heavy. That’s why talking only about GPUs when people say “the year of agents” misses a big part of the picture.

Why do people run agents on their own hardware (like Mac minis)?

One reason OpenClaw spread quickly is the idea that individuals or small teams can run an agent on their own hardware 24/7. Mac minis became popular for that: quiet, always-on friendly, relatively affordable, and a natural fit for home/office setups. One developer write-up highlights that the appeal of running OpenClaw on your own hardware (e.g., a Mac mini) is control over data.

Three motivations stand out:

Data security / data locality: Email, calendars, customer documents, API keys… these are where agents are valuable, and also where they are risky. There have also been security reports and warnings suggesting that OpenClaw installations can carry configuration/data leakage risks. That pushes users toward the instinct of “don’t send everything to the cloud; at least keep control in-house.”

Speed / latency: Agents move in small steps, so latency is felt more sharply. Instead of round-tripping to the cloud for every step, doing as much as possible locally improves the experience. Local run guides often emphasize the “always-on, low-latency” motivation.

Here, the point is not just the CPU: Apple Silicon’s SoC design (CPU + GPU + Neural Engine in one package) can be efficient in low-power, always-on scenarios.

But “running locally” does not automatically mean “secure.” Agents can still create risk through API keys, file permissions, and browser sessions. In practice, what matters is maintaining least privilege, sandboxing, and disciplined secret management locally as well.
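As a rough illustration of what least privilege and secret discipline can look like on a local machine, here is a minimal Python sketch. The allowlisted tool names, the sandbox directory, and the helper functions are all hypothetical, and the path check assumes a POSIX-style filesystem. It is a sketch of the idea, not a complete sandbox.

```python
import os
from typing import Optional

ALLOWED_TOOLS = {"read_file", "send_email"}  # hypothetical allowlist
ALLOWED_DIR = "/srv/agent/workspace"         # hypothetical sandbox root


def authorize(tool: str, path: Optional[str] = None) -> bool:
    """Allow a tool call only if the tool is allowlisted and any path
    it touches resolves to a location inside the sandbox root."""
    if tool not in ALLOWED_TOOLS:
        return False
    if path is not None:
        root = os.path.realpath(ALLOWED_DIR)
        real = os.path.realpath(path)  # collapses ".." and resolves symlinks
        return os.path.commonpath([real, root]) == root
    return True


def load_secret(name: str) -> str:
    """Read a secret from the environment instead of hard-coding it."""
    value = os.environ.get(name)
    if not value:
        raise RuntimeError(f"secret {name} is not set")
    return value
```

With these definitions, `authorize("read_file", "/srv/agent/workspace/a.txt")` is allowed, while a path that escapes the workspace through `..` or a tool that is not on the allowlist is rejected.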

So “Mac mini + agent” isn’t just a hobby trend. It’s an early behavior shift that can pull demand toward CPUs/platforms: people want to do part of inference locally and keep an agent running continuously.

How does this thesis map to CPU-related companies?

AMD: x86 CPUs and the chance to own the “local agent” narrative

For AMD, the agent trend can work through two channels:

PC/edge: AMD has been explicit about open-source and software-layer investments to make local model/agent workloads easier on Ryzen AI (projects like GAIA and Lemonade). The message is straightforward: local LLM/agent → lower latency + more privacy + on-device optimization.

The CPU’s role: Because the “coordinating” side of agents runs on the CPU, Ryzen-class CPUs with high core counts, strong single-thread performance, and solid memory bandwidth can become more visible in categories like local agent boxes (developer machines, small office servers, always-on mini workstations).

The key investor point: as agent workloads grow, “I’ll just buy a GPU” becomes insufficient, and the balance of CPU + memory + I/O matters. If AMD can position the agent narrative around the full system (not only the NPU), it can help the market price the “AI PC” wave as a platform story rather than a single component story.

Arm: doesn’t sell CPUs directly, but benefits via architecture/royalties

Arm may not look like a CPU manufacturer, but it sets the standard for CPU architectures and collects royalties at ecosystem scale. In an agent world, two advantages stand out:

Energy efficiency and ubiquity: Agents tend to be always-on and embedded into devices. Arm is already the natural architecture in edge/IoT and mobile. Arm’s messaging around edge AI emphasizes real-time decision-making and efficiency.

CPU inference and orchestration: Arm positions the CPU’s role in inference and AI workflows through broad framework/OS compatibility and distribution scale.

As an investment category, Arm becomes a “picks-and-shovels” / royalty way to play “agent compute”: as agents move down to the edge and device counts rise, Arm’s volume/royalty dynamics can strengthen (and if data center Arm continues to expand, the story gets even broader).

IBM: enterprise “AI in place” and security-driven CPU demand

What makes IBM interesting in the agent/CPU discussion is that enterprise buyers often have stronger “keep the data where it is” requirements. And when we say “IBM CPU” here, it’s more precise to say the IBM Z (mainframe) and Power processor ecosystems: infrastructure designed for mission-critical workloads where data locality and security constraints matter.

In IBM’s own product framing:

- On the IBM Z side, Telum emphasizes “on-chip AI acceleration,” aiming to run certain AI inferences without extra hardware while keeping data on the system.

- On the Power side, IBM’s Power11 messaging includes “enterprise IT / AI-native workloads” language and references components like the Spyre Accelerator.

Through an agent lens, IBM’s potential benefit looks like this: as large institutions deploy agents into production, they will care about “customer data shouldn’t leave, audits must be local, latency must be predictable.” IBM’s mainframe/Power value proposition has long been “mission-critical + security + data in place.” If agents reinforce that, IBM’s CPU/accelerator story can tie less to generic AI hype and more to enterprise-grade, reliable execution.

Closing: If 2026 is the year of agents, is it also the year of CPUs?

My read: if 2026 really is the “year of agents,” it does not imply that everything outside the GPU becomes irrelevant. If anything, the opposite is true. As agents start producing real-world value, the bottleneck shifts from pure model compute to system execution. That pulls the CPU (and the memory, storage, and networking architecture around it) back toward the center of the stack.

Projects like OpenClaw being run on Mac minis with an “always-on, my data stays with me” motivation are an early signal of that behavior shift. On AMD’s side, software investments to support local agent/LLM stacks show the CPU isn’t just a side character in this evolution. Arm can benefit as agents spread to edge devices through a royalty-based “infrastructure” dynamic. And in the enterprise, agents going into production can make “AI in place + data in place” even more valuable, which is where IBM Z and Power are naturally positioned.

If this is going to be the foundation of a new investment thesis, the next step is to stop putting CPU-related names into one “AI compute” bucket and instead segment them by three agent-driven needs:

(1) local/edge always-on stations,

(2) enterprise data residency + audit infrastructure, and

(3) ecosystem/royalty scale.
